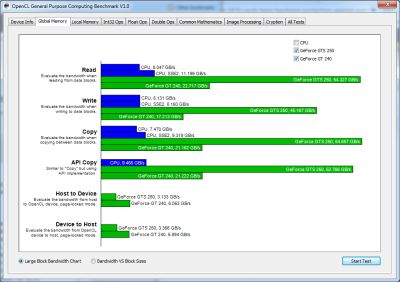

OPENCL BENCHMARK UCSD VERIFICATION

Joined NVIDIA as PCIe Verification Team Intern. Appointed Head of Department of Technical Arts for ATMOS Technical Fest. Left my PCIe Verification Team internship at NVIDIA to finish my degree. Worked under Prof. Soumya J on fault-tolerant routing for Network-on-Chips.

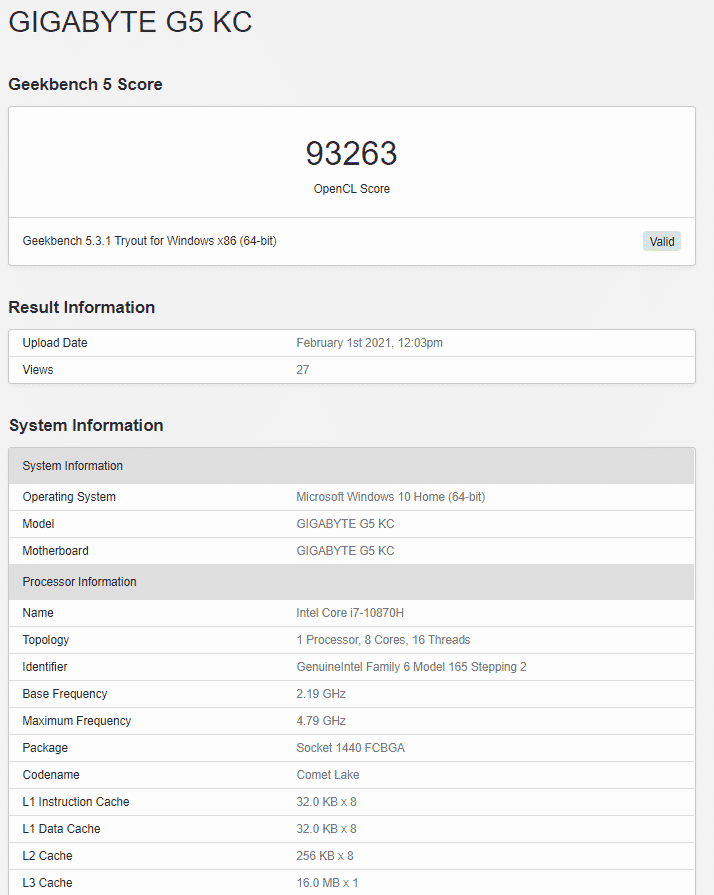

OPENCL BENCHMARK UCSD FULL

Joined NVIDIA as a full-time Engineer in the PCIe Verification Team. Promoted to Senior ASIC Engineer at NVIDIA. Last day of work (6 Aug) at NVIDIA before I left for my Masters. Completed 3 years as a full-time Engineer at NVIDIA. Teaching Assistant for CSE 140: Digital Systems under Prof. Tajana Simunic Rosing. Teaching Assistant for CSE 140L: Digital Systems Laboratory under Prof. Bryan Chin. I also interned at Ethnus Consultancy on the backend team, working with Redis and PHP, in Summer '16.

OPENCL BENCHMARK UCSD ANDROID

In my junior years I worked as a freelance web developer and contributed to many projects (a college fest management system, college fest websites, startup websites, personal projects on an expense tracker, and backend handling for a college Android application). In my sophomore year, I worked as a researcher under Professor Soumya J at BITS (Pilani), Hyderabad on fault-tolerant routing for Network-on-Chips. During the pre-final year of my undergraduate studies, I interned in the PCIe team at NVIDIA Bangalore, working on workflow automation, coverage closure, regression debugging and testbench fixes. I delved into designing and verifying PCIe transaction layer features for multiple generations of the protocol. Before working full-time I was pursuing my Bachelors at BITS (Pilani), Hyderabad in Electrical and Electronics Engineering. Prior to joining graduate school, I worked in industry for 3+ years at NVIDIA within their PCIe hardware team at the Bangalore office.

I am mainly interested in Computer Architecture, including CPU/GPU architectures, Network-on-Chips, and Processor Design. Alongside my masters I am also a Research Assistant under Professor Tajana Rosing. I am currently doing a summer internship at Qualcomm.

Perceived benefits of the heterogeneous computing models have led to GPUs becoming an indispensable part of existing/future HPC infrastructures. The semiconductor industry has enabled a continuous increase in computing capabilities of GPUs across successive product generations. In this light, performance portability of applications across different generations of GPUs with minimal programmer intervention is desired. There is, however, a lack of tool-chain support that can help with porting applications across GPUs with myriad computing capabilities. In this paper, we propose an Auto-Tune tool for OmpSs-OpenCL kernels to ease the process of porting applications across different generations of GPUs. The proposed tool focuses on modifying the OpenCL kernel execution configuration based on GPU specifications and does not require any user intervention, providing reasonable performance portability. We evaluate our proposal on 7 different benchmarks with varied characteristics ported across three different GPU architectures. In addition, the Auto-Tune tool integrated with OmpSs-OpenCL offered a maximum performance gain of 10% for the Matmul workload at runtime.
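The abstract describes adjusting an OpenCL kernel's execution configuration from GPU specifications. The following is only a minimal sketch of that general idea, not the Auto-Tune tool's actual method. The `DeviceSpec` fields, the function names, and the heuristic itself (pick the largest work-group size that is a multiple of the SIMD width and fits the device limit, then pad the global size to a multiple of it) are all assumptions made for illustration. In a real program the device values would come from queries such as `clGetDeviceInfo` with `CL_DEVICE_MAX_WORK_GROUP_SIZE`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceSpec:
    """Hypothetical subset of GPU properties used by this sketch."""
    max_work_group_size: int  # e.g. the value of CL_DEVICE_MAX_WORK_GROUP_SIZE
    simd_width: int           # warp/wavefront width, assumed known for the device


def pick_local_size(spec: DeviceSpec, requested: int) -> int:
    """Return the largest multiple of simd_width not exceeding
    min(requested, device maximum); fall back to the limit itself
    when it is smaller than one SIMD width."""
    limit = min(requested, spec.max_work_group_size)
    if limit < spec.simd_width:
        return max(1, limit)
    return (limit // spec.simd_width) * spec.simd_width


def pad_global_size(problem_size: int, local_size: int) -> int:
    """Round the global work size up to a whole number of work-groups.
    The kernel is assumed to guard against out-of-range work-item ids."""
    groups = (problem_size + local_size - 1) // local_size
    return groups * local_size


def tune_launch(spec: DeviceSpec, problem_size: int, requested_local: int = 256):
    """Return (global_size, local_size) adapted to the given device."""
    local = pick_local_size(spec, requested_local)
    return pad_global_size(problem_size, local), local


if __name__ == "__main__":
    # Two made-up devices standing in for different GPU generations.
    older = DeviceSpec(max_work_group_size=512, simd_width=32)
    newer = DeviceSpec(max_work_group_size=1024, simd_width=64)
    print(tune_launch(older, problem_size=1000, requested_local=1024))
    print(tune_launch(newer, problem_size=1000, requested_local=1000))
```

The same kernel source gets a different launch configuration on each device without the programmer editing it, which is the kind of portability the abstract aims for. A real tuner would likely also weigh register and local-memory usage, which this sketch ignores.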